Text Generation

fastText

Pennsylvania German

wikilangs

nlp

tokenizer

embeddings

n-gram

markov

wikipedia

feature-extraction

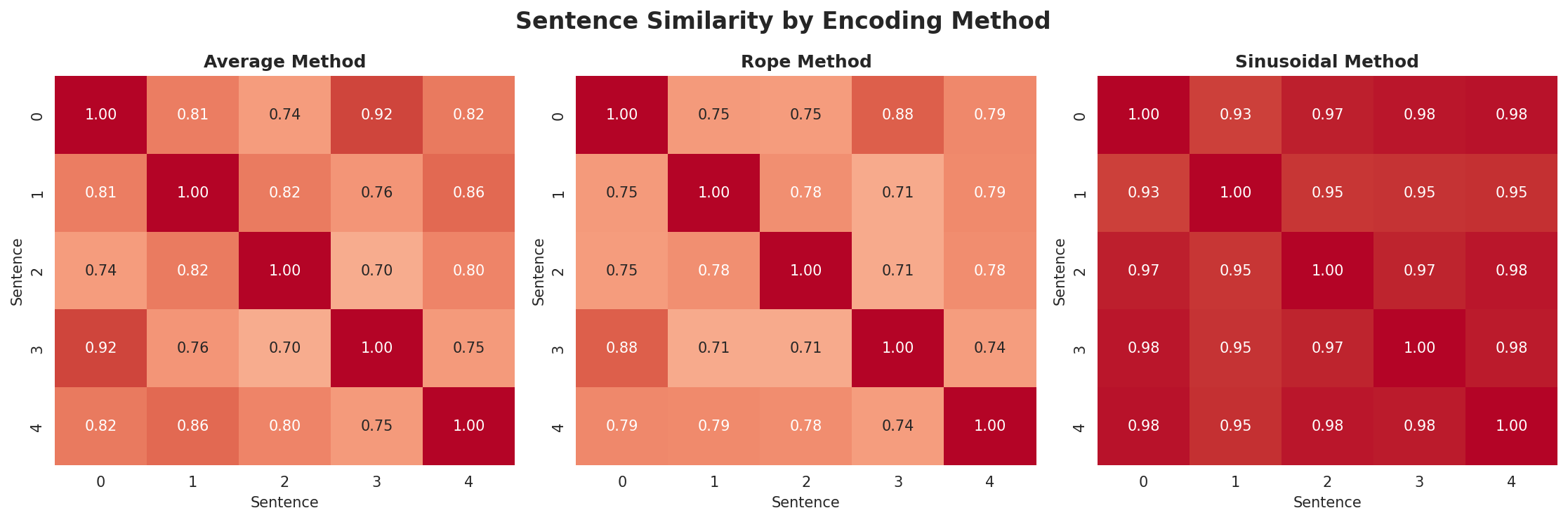

sentence-similarity

tokenization

n-grams

markov-chain

text-mining

babelvec

vocabulous

vocabulary

monolingual

family-germanic_west_continental

Instructions to use wikilangs/pdc with libraries, inference providers, notebooks, and local apps. Follow these links to get started.

- Libraries

- fastText

How to use wikilangs/pdc with fastText:

    from huggingface_hub import hf_hub_download
    import fasttext

    model = fasttext.load_model(hf_hub_download("wikilangs/pdc", "model.bin"))

- Notebooks

- Google Colab

- Kaggle
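Once the fastText model is loaded as in the snippet above, it can be queried for vectors. The following is a minimal sketch, assuming `model.bin` is a fastText model with word vectors (suggested by the `embeddings` and `sentence-similarity` tags, but not confirmed on this page). The helper names `cosine_similarity`, `sentence_similarity`, and `demo`, and the query strings, are illustrative and not part of the repository.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def sentence_similarity(model, first, second):
    """Compare two texts using the model's sentence vectors."""
    # fastText's get_sentence_vector rejects text containing newlines,
    # so newlines are replaced with spaces first.
    vec_a = model.get_sentence_vector(first.replace("\n", " "))
    vec_b = model.get_sentence_vector(second.replace("\n", " "))
    return cosine_similarity(vec_a, vec_b)


def demo():
    """Download the model and run a few queries (needs network access)."""
    # Imported here so the helpers above work without these packages installed.
    from huggingface_hub import hf_hub_download
    import fasttext

    model = fasttext.load_model(hf_hub_download("wikilangs/pdc", "model.bin"))
    print("vector dimension:", model.get_dimension())
    # "Deitsch" and "Schprooch" are placeholder query strings chosen for illustration.
    print(model.get_nearest_neighbors("Deitsch", k=5))
    print(sentence_similarity(model, "Deitsch", "Deitsch Schprooch"))
```

Calling `demo()` requires the `fasttext` and `huggingface_hub` packages. If the model was trained without subword information, out-of-vocabulary words may map to zero vectors, in which case `sentence_similarity` returns 0.0.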

- Xet hash: 36f8fb11d3ec8754090fdf0b484c7a266aa97c4661db8fd4193519d42539412f

- Size of remote file: 116 kB

- SHA256: 3c67e6b3b504b0d567cde7ab7ed41f96ae83086a74d5f0bf55c7e3283c3fcd48
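The SHA256 value listed above can be used to check a downloaded copy of the file. The sketch below uses only the Python standard library. The page does not state which repository file this digest belongs to, so the path passed in is left to the reader, and the helper names `sha256_of_file` and `matches_expected` are illustrative.

```python
import hashlib

# Digest copied from the file listing above.
EXPECTED_SHA256 = "3c67e6b3b504b0d567cde7ab7ed41f96ae83086a74d5f0bf55c7e3283c3fcd48"


def sha256_of_file(path, chunk_size=1 << 20):
    """Return the hex SHA-256 digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        # iter() with a sentinel keeps reading until read() returns b"".
        for block in iter(lambda: handle.read(chunk_size), b""):
            digest.update(block)
    return digest.hexdigest()


def matches_expected(path, expected_hex=EXPECTED_SHA256):
    """True if the file's digest equals the expected hex string."""
    return sha256_of_file(path) == expected_hex.strip().lower()
```

A mismatch would usually indicate an incomplete download or a different file revision than the one listed here.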

Xet efficiently stores large files inside Git by splitting them into unique chunks, which speeds up uploads and downloads.