Agentic-R1: Distilled Dual-Strategy Reasoning

The model was presented in the paper Agentic-R1: Distilled Dual-Strategy Reasoning.

Code: https://github.com/StigLidu/DualDistill

Abstract

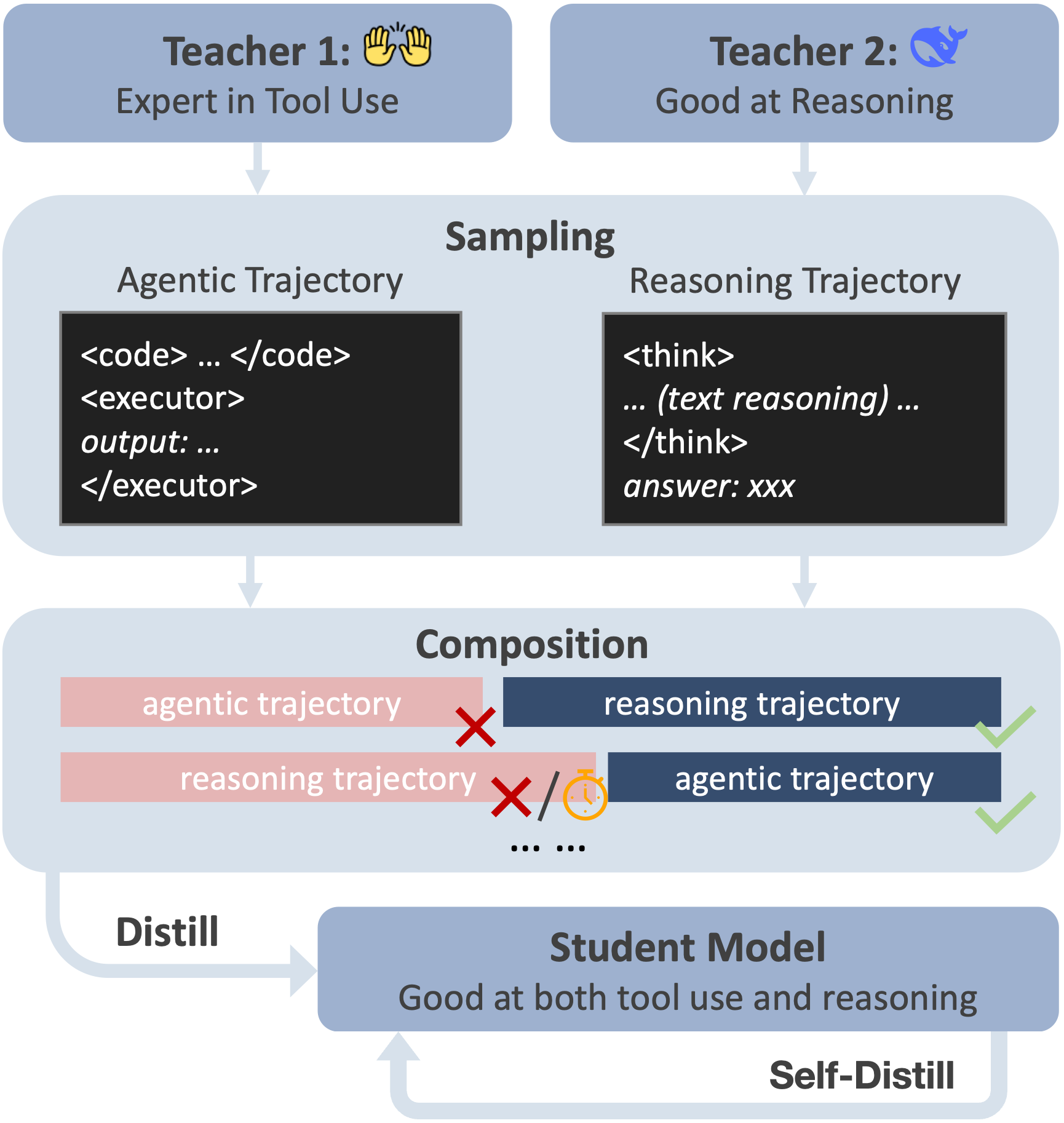

Current long chain-of-thought (long-CoT) models excel at mathematical reasoning but rely on slow and error-prone natural language traces. Tool-augmented agents address arithmetic via code execution, but often falter on complex logical tasks. We introduce a fine-tuning framework, DualDistill, that distills complementary reasoning strategies from multiple teachers into a unified student model. Using this approach, we train Agentic-R1, which dynamically selects the optimal strategy for each query, invoking tools for arithmetic and algorithmic problems, and using text-based reasoning for abstract ones. Our method improves accuracy across a range of tasks, including both computation-intensive and standard benchmarks, demonstrating the effectiveness of multi-strategy distillation in achieving robust and efficient reasoning.

Key Features

- Efficient Training: Integrates tool use into long-chain-of-thought (CoT) reasoning using only 4 × A6000 GPUs

- Unified Reasoning: Fuses heterogeneous reasoning traces from multiple teacher models into a single student model

Overview of DualDistill methodology

Datasets

| Dataset | Description | Link |
|---|---|---|
| Training Set | Complete training dataset with teacher trajectories | 🤗 HuggingFace |
| Test Set | Evaluation benchmarks | dataset/test/ |

Results

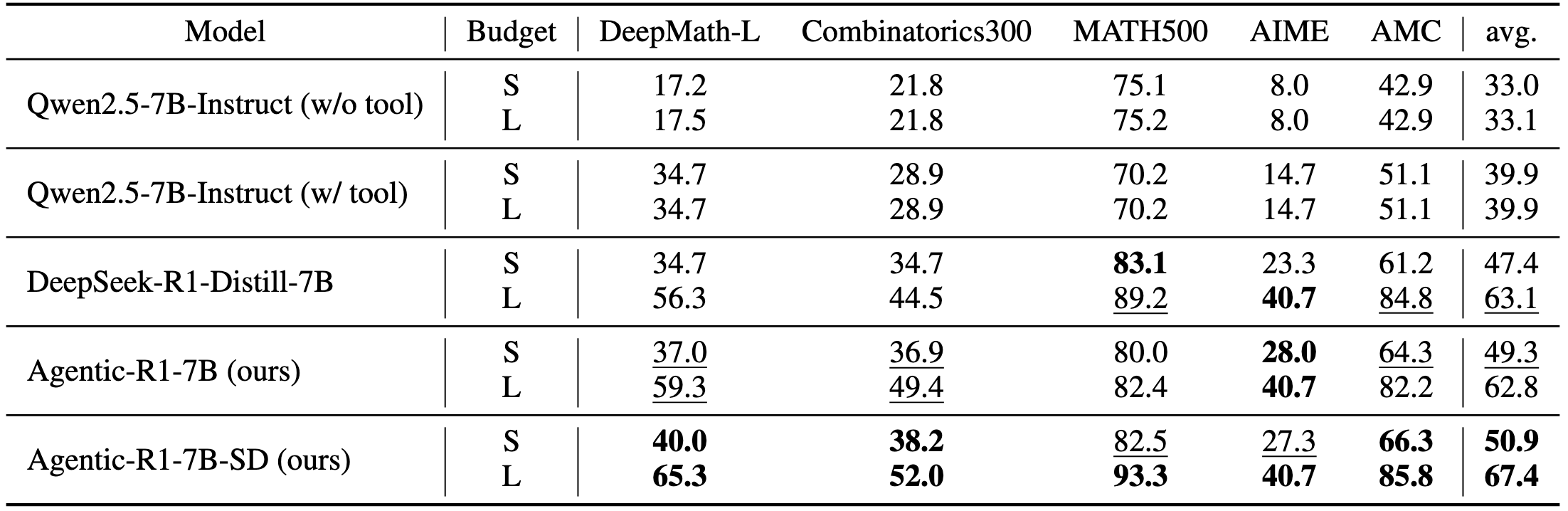

- Agentic-R1 demonstrates significant performance gains on DeepMath-L and Combinatorics300, where both complex reasoning and tool use are crucial for success.

- Agentic-R1-SD (Self-Distilled) further enhances performance through our self-distillation approach, consistently outperforming baseline models across nearly all evaluation tasks.

Quick Start

Installation

Clone the repository:

    git clone https://github.com/StigLidu/DualDistill.git
    cd DualDistill

Create environment (optional but recommended):

    conda create -n dualdistill python=3.11
    conda activate dualdistill

Install dependencies:

    pip install -r requirements.txt
    pip install flash-attn --no-build-isolation

Inference Server and Evaluation

To run inference and evaluation using the provided scripts:

Start inference server:

    bash script/eval_script/start_inference_server.sh [model_path] [display_name] [port]

Run evaluation:

    bash script/eval_script/eval_remote_server.sh \
        [url] [display_name] [data_path] [code_mode] [max_token]

Example:

    bash script/eval_script/eval_remote_server.sh \
        "http://localhost:8080/v1" "agentic-r1" "dataset/test/math.json" "true" "4096"
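Before launching a full evaluation, it can help to send a single prompt to the server as a sanity check. The sketch below is not part of the repository. It assumes the inference server exposes an OpenAI-style `chat/completions` route under the `/v1` URL used above, and that `display_name` is the model name the server expects; neither is confirmed by this README. The helper names `build_chat_request` and `ask` are hypothetical.

```python
import json
import urllib.request


def build_chat_request(base_url, model, prompt, max_tokens=4096):
    """Build a POST request for an OpenAI-style chat completions route.

    Assumption: the server accepts this payload shape at <base_url>/chat/completions.
    """
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(base_url, model, prompt, max_tokens=4096, timeout=600):
    """Send one prompt and return the text of the first returned choice."""
    request = build_chat_request(base_url, model, prompt, max_tokens)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]
```

With the server from the previous step running, a call such as `ask("http://localhost:8080/v1", "agentic-r1", "What is 17 * 23?")` should return the model's reply as a string. If the route or payload differs on your setup, adjust `build_chat_request` accordingly.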

Trained Models

| Model | Description | HuggingFace Link |
|---|---|---|
| Agentic-R1-7B | Base model with teacher distillation | 🤗 Download |
| Agentic-R1-7B-SD | Enhanced model with self-distillation | 🤗 Download |

⚠️ Important Notes

- Code Execution Safety: The evaluation scripts execute model-generated code locally, so only evaluate models you trust.

- Inference Config: If you are using a recent version of vLLM and encounter an error regarding the maximum context length, you may need to modify model_max_length in tokenizer_config.json.

- Self-Distillation Warning: The self-distillation step requires sampling many trajectories and can be time-consuming.
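The Inference Config note above amounts to editing one JSON field. As a minimal sketch, assuming `tokenizer_config.json` sits in your local model directory and is plain JSON, the hypothetical helper below rewrites `model_max_length` and returns the previous value so the change can be reverted. The right value depends on the model and on the context length reported in the error, so none is recommended here.

```python
import json
from pathlib import Path


def set_model_max_length(config_path, new_length):
    """Set model_max_length in a tokenizer_config.json; return the old value.

    Other keys in the file are preserved as-is.
    """
    path = Path(config_path)
    config = json.loads(path.read_text(encoding="utf-8"))
    old_length = config.get("model_max_length")  # None if the key was absent
    config["model_max_length"] = int(new_length)
    path.write_text(
        json.dumps(config, indent=2, ensure_ascii=False) + "\n",
        encoding="utf-8",
    )
    return old_length
```

For instance, `set_model_max_length("path/to/model/tokenizer_config.json", 32768)` would apply an illustrative limit of 32768 tokens; both the path and the number are placeholders.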

License

This project is licensed under the MIT License - see the LICENSE file for details.

Acknowledgments

We thank the following open-source projects for their foundational contributions:

- OpenHands - Agent framework

- DeepMath-103K - Mathematical reasoning dataset

- vLLM - High-performance inference engine

Contact

For questions or support, please contact:

- Weihua Du: [email protected]

Citation

If you find our work useful, please consider citing:

    @article{du2025agentic,
      title={Agentic-R1: Distilled Dual-Strategy Reasoning},
      author={Du, Weihua and Aggarwal, Pranjal and Welleck, Sean and Yang, Yiming},
      journal={arXiv preprint arXiv:2507.05707},
      year={2025}
    }

⭐ Star us on GitHub if this project helped you!
